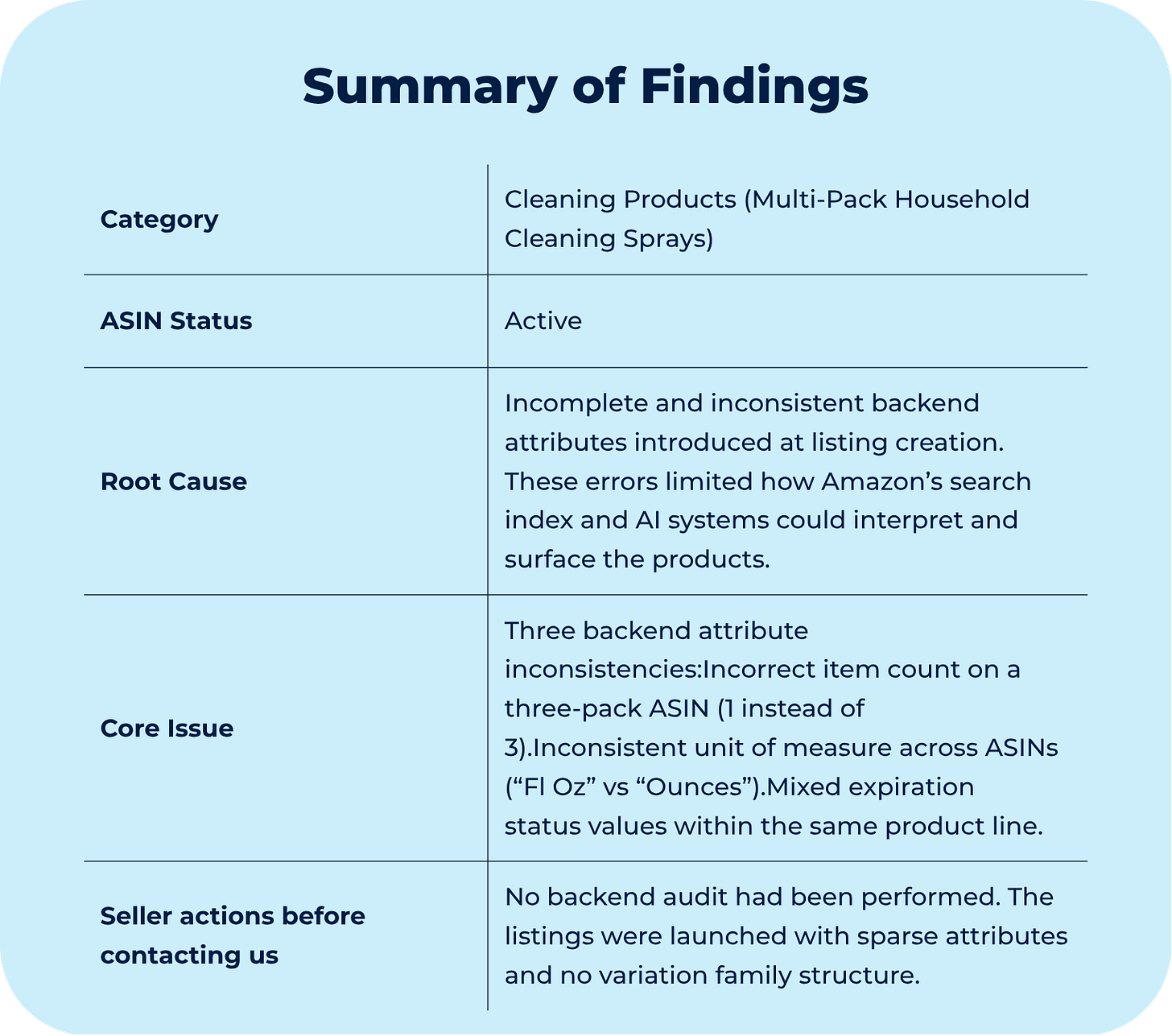

Case #015 - ⚠️3 silent catalog errors killing Amazon sales

These cleaning listings were live, stocked, and ready to sell, but there were three reasons why nobody found them.

If you’re new here, welcome.

If you’ve been reading for a while, thank you for being here.

Each week, we break down one real Amazon case from the field. Not to share tactics, but to decode how Amazon’s system actually behaves and what to do when it breaks.

All past cases live in a single searchable archive, built to help you identify recurring patterns across time.

Context

The products were live. The sales were not.

A seller from a cleaning product brand came to us with a straightforward request: the products were live on Amazon, but sales were not moving at the pace they were expecting.

This time, there was no suppression event, no ranking crash, no policy flag to investigate.

The listings were simply underperforming, and the seller wanted to do everything possible to increase visibility.

This turned out to be a two-part case. The first part involved all the ASINs in the catalog: every listing needed an AI Listing Backend Enhancement, our optimization service designed to improve how Rufus and COSMO read, index, and rank a product. The second part involved a subset of those ASINs: several products were standalone listings that should have been grouped together as a variation family, and that structure needed to be built correctly before the optimization work could be fully applied.

For the record, the process runs on two parallel tracks. The first is front-end and back-end content optimization built around semantic SEO relevance rather than keyword density, so that the listing content matches how customers actually search. The second is a full audit of the backend fields that Amazon’s system reads but sellers rarely check.

That audit is where the case got interesting. What started as a routine optimization job uncovered three backend errors that had been there since the products launched. These errors never generated an issue and never triggered a suppression; they had simply been encoding incorrect information into the catalog record.

Before any variation family could be built, those needed to be resolved.

Diagnostic

What was actually blocking them

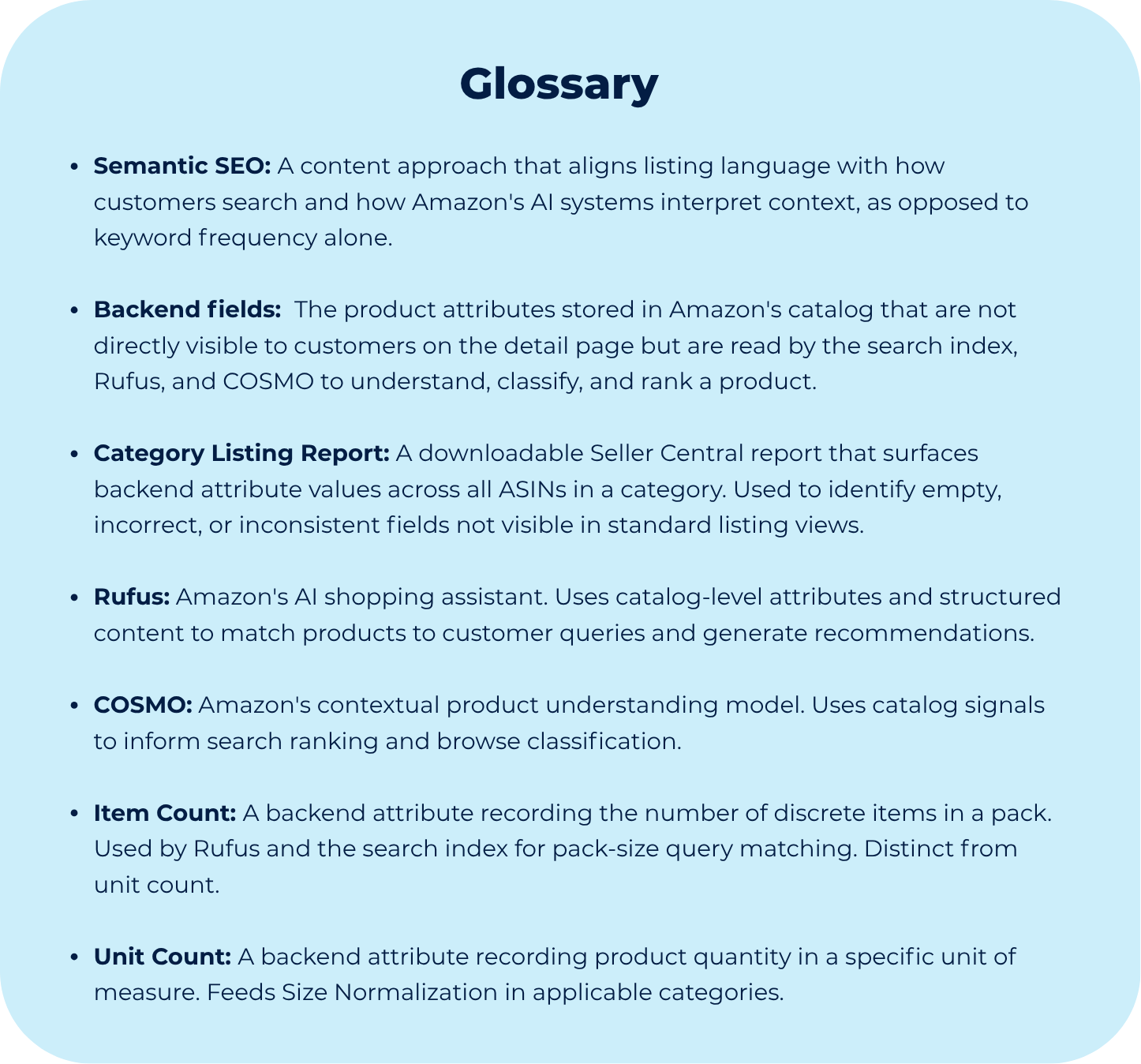

To understand why those three errors mattered, it helps to understand how Amazon decides which products to surface in the first place. Amazon places listing quality and completeness second on its search ranking hierarchy, behind only sales velocity. The practical implication: before a product can earn velocity, it has to give the system enough information to surface it accurately.

A catalog that is thin at the backend attribute level (not just light on copy) starts every query at a disadvantage.

That was part of the reason these products were underperforming. The listings had been created without a backend audit. The content was sparse, key attributes were empty or incorrect, and the system had no variation structure to consolidate the catalog around a single detail page. Individually, each gap was small. Together, they added up to a system that did not have enough information to rank confidently.

The AI Listing Backend Enhancement addresses this across two layers of Amazon’s discovery infrastructure.

The first is the traditional search index. Attributes like item count, unit of measure, and product type are indexed directly. Title and bullet copy are not substitutes for these fields.

For example, a query for “3 pack cleaning spray” is matched against the item count attribute as well as the title. If that field says 1, the ASIN does not match the query, regardless of what the listing copy says.
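To make the idea concrete, here is a toy sketch in Python. It is not Amazon’s matching logic, which is not public; the `item_count` field name, the regular expression, and the `matches_pack_query` helper are all assumptions made for illustration. It only shows why a structured value of 1 can lose a pack-size query even when the title says “3 Pack”.

```python
import re

def requested_pack_size(query):
    """Pull a pack size such as '3 pack' or '3-pack' out of a search query."""
    m = re.search(r"(\d+)\s*-?\s*pack\b", query.lower())
    return int(m.group(1)) if m else None

def matches_pack_query(query, listing):
    """Toy rule (an assumption): the structured item_count must equal the
    pack size requested in the query. The title is deliberately ignored."""
    wanted = requested_pack_size(query)
    if wanted is None:
        return True  # no pack size in the query, nothing to compare
    return listing.get("item_count") == wanted

# Hypothetical listings: same title, different backend item count.
mislabeled = {"title": "Cleaning Spray, 3 Pack", "item_count": 1}
corrected = {"title": "Cleaning Spray, 3 Pack", "item_count": 3}

print(matches_pack_query("3 pack cleaning spray", mislabeled))  # False
print(matches_pack_query("3 pack cleaning spray", corrected))   # True
```

Both listings carry the same title. Only the backend value changes the outcome, which is the point of the example.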

The second is the AI context layer. Rufus and COSMO construct their understanding of a product from catalog data. When a customer asks Rufus for a product recommendation, it surfaces products for which it has sufficient structured context to generate a confident answer. So a thin or inconsistent backend record does not produce a negative signal. It produces an absence of signal, and that absence disadvantages the product in every recommendation.

With that in mind, we ran the category listing report audit, a standard step in the service process, to see which fields were empty, misaligned, or inaccurate. We found three errors introduced at listing creation:

Item count: The three-pack ASIN recorded an item count of 1. This is the field pack-size queries resolve against. The error was silent; the listing had been live with this value since launch.

Unit of measure: One ASIN used “Fl Oz” for unit count while the others used “Ounces.” In product types subject to Size Normalization, the displayed size string is derived from this attribute, not from the listing copy. Inconsistent unit types across children that share a variation family produce inconsistent size labels in the variation selector.

Expiration status: Expiration flags were mixed across ASINs in the same product line. As structurally identical products, they should carry the same value. Inconsistency here creates ambiguity in the product type record.

None of these caused a processing error or a suppression. The system accepted all three and built the catalog record around them. That is precisely what makes them worth finding before they are locked into a variation family at formation.
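The three checks above can be sketched as a small script. This is a minimal illustration, not our audit tooling: the rows stand in for an export of the category listing report, the ASIN placeholders and titles are invented, and the field names (`item_count`, `unit_count_type`, `is_expirable`) are hypothetical and will differ from the column headers in a real report.

```python
import re

# Hypothetical rows standing in for a category listing report export.
rows = [
    {"asin": "ASIN-A", "title": "Cleaning Spray 16 oz", "item_count": 1,
     "unit_count_type": "Ounces", "is_expirable": "No"},
    {"asin": "ASIN-B", "title": "Cleaning Spray 32 oz", "item_count": 1,
     "unit_count_type": "Fl Oz", "is_expirable": "Yes"},
    {"asin": "ASIN-C", "title": "Cleaning Spray 16 oz, 3 Pack", "item_count": 1,
     "unit_count_type": "Ounces", "is_expirable": "No"},
]

def pack_size_in_title(title):
    """Return the pack size named in the title, or None if there is none."""
    m = re.search(r"(\d+)\s*-?\s*pack\b", title.lower())
    return int(m.group(1)) if m else None

def audit(rows):
    findings = []
    # Check 1: item count should agree with any pack size in the title.
    for row in rows:
        pack = pack_size_in_title(row["title"])
        if pack is not None and row["item_count"] != pack:
            findings.append(
                f"{row['asin']}: item_count={row['item_count']} "
                f"but title says {pack}-pack"
            )
    # Checks 2 and 3: these fields should hold one value across the line.
    for field in ("unit_count_type", "is_expirable"):
        values = sorted({row[field] for row in rows})
        if len(values) > 1:
            findings.append(f"{field}: mixed values {values}")
    return findings

for finding in audit(rows):
    print(finding)
```

With the sample rows, the script reports one finding per error type. None of them would surface as a processing error, which is why they have to be looked for deliberately.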

Working with a catalog that underperforms without a clear system-level cause?

AI Listing Backend Enhancement addresses both the content and attribute layers that determine how Amazon’s algorithms read and rank your products. Learn more about how the service works.

Thought Process

How we decided what to fix first

Knowing what was broken is only half the equation. The other half is deciding what to fix first and why, because in catalog work, sequence matters as much as the fix itself.

When a product underperforms without a visible system event, the first instinct is usually to look at the listing copy: the title, the bullets, the images. These are accessible and easy to evaluate. They are also not where the problem usually lives.

The category listing report shifts the diagnostic to the attribute layer: the fields that Rufus, COSMO, and the search index actually read. This report runs at the start of every AI LBE engagement because it shows what the system sees, not what the listing looks like. Those are not always the same thing.

This is exactly the type of report we analyze at the start of every catalog audit:

To understand why this matters, consider Amazon’s own ranking criteria, which place listing quality and completeness second, right behind sales velocity.

That placement matters because you cannot earn velocity from a listing that the system does not fully understand.

This is why backend fields that seem minor are worth filling.

For example, expiration status does not change what the customer sees, but it contributes to the completeness signal. Alternative Keywords are likewise invisible on the detail page, but they give Rufus the context it needs to recommend a product for queries the seller never thought to target.

Each field is a small signal. Together, they determine whether the system has a full picture of the product or a partial one.

There is also a protection angle that often goes unnoticed. Empty backend fields are fields anyone with catalog access can write to. A hijacker or unauthorized contributor can populate those attributes with incorrect values, and the seller may not catch it until the damage is done. Filling those fields accurately is not just optimization. It is also how you close that door.
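Finding the empty fields is the first step toward filling them. A minimal sketch, assuming each listing is available as a dictionary of backend attributes; the field names in `AUDITED_FIELDS` and the sample listing are hypothetical, not Amazon’s actual attribute names.

```python
# Hypothetical list of backend fields to audit for completeness.
AUDITED_FIELDS = [
    "item_count",
    "unit_count_type",
    "is_expirable",
    "alternative_keywords",
]

def is_blank(value):
    """A field counts as empty if it is missing, None, or only whitespace."""
    return value is None or str(value).strip() == ""

def empty_fields(listing, audited_fields=AUDITED_FIELDS):
    """Return the audited fields that are empty for one listing."""
    return [f for f in audited_fields if is_blank(listing.get(f))]

# Hypothetical listing with two gaps.
listing = {
    "asin": "ASIN-A",
    "item_count": 1,
    "unit_count_type": "Ounces",
    "is_expirable": "",
    "alternative_keywords": None,
}

print(empty_fields(listing))  # ['is_expirable', 'alternative_keywords']
```

The output is a per-listing to-do list: every field it names is both a missing signal and an open slot that someone else could fill.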

The three attribute inconsistencies were not what was causing the underperformance. They were found because the audit was run systematically. Without it, they would have been carried straight into the variation family.

That matters because structural attributes are written into the catalog record at formation. Fixing them after the family is live is harder and riskier than fixing them before. The audit is what makes that possible.

The broader logic is simple: low velocity comes from low visibility, low visibility comes from an incomplete catalog, and an incomplete catalog is almost always the result of a listing that was never properly audited at launch.